Most organizations treat AI as a purchase decision rather than an architectural one. They buy tools, run pilots, and wonder why results don’t compound.

At Ailudus, we’ve observed that AI as a business instrument generates sustainable returns only when it is embedded within disciplined operating systems. The difference between temporary productivity gains and structural competitive advantage lies in how you design the system around the technology, not in the technology itself.

AI as a Structural Component

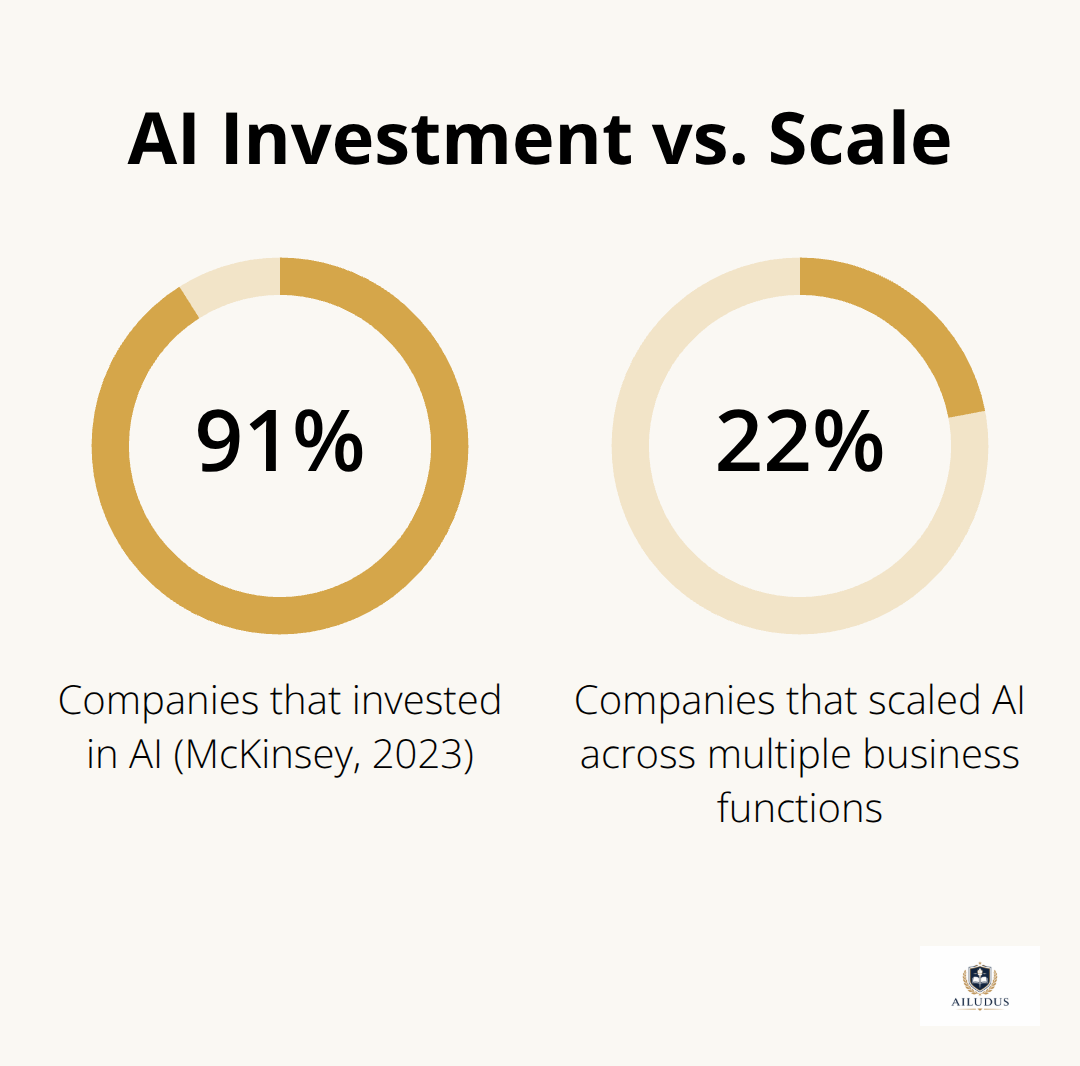

The gap between AI pilots and production systems is not a technology problem. McKinsey’s 2023 research found that 91% of companies invested in AI, yet only 22% scaled it across multiple business functions. This gap exists because most organizations conflate tool adoption with system design.

They acquire capability without building the operating structure that allows that capability to compound.

Consider how Netflix operates. The company processes petabytes of data daily while managing its content library efficiently and delivering personalized recommendations across 190+ countries. That scale does not come from better algorithms alone. Netflix built an integrated infrastructure in which data flows from viewer interactions through recommendation engines to personalized content presentation, and each component reinforces the others. The system itself generates leverage: when Netflix deploys a new recommendation algorithm, the infrastructure absorbs it without disruption.

Uber follows the same pattern. Its systems process billions of data points daily for demand forecasting and driver-rider matching, and their value comes from how data, decision logic, and execution bind together, not from isolated analytical capability. Without that binding structure, even sophisticated AI produces temporary productivity spikes that fade once the novelty wears off.

Framework Precedes Tools

Most implementation failures trace back to inverting this sequence. Organizations select tools first, then attempt to build systems around them. This approach guarantees inefficiency: the tool shapes decisions rather than business requirements shaping tool selection. A company might adopt a particular machine learning platform because it is popular, then spend months retrofitting data pipelines and governance structures to match the platform’s architecture. The platform becomes the constraint. Disciplined operators reverse this. They first define their operating leverage points (where AI decision-making creates measurable business impact), then structure data flows and decision hierarchies to support those points, and only then select instruments that fit the structure. This sequence demands more upfront work but eliminates rework and vendor dependency later.

Systems That Remain Under Your Control

The sustainability question separates long-term competitive advantage from temporary capability. When AI exists in isolation (a machine learning model trained by a specialist, deployed in a sandbox, monitored sporadically), it degrades. Model drift occurs. Data quality shifts. Performance decays. The system fails silently until someone notices. Organizations with sustainable AI embed it within operating systems that include continuous data governance, performance monitoring, and defined override mechanisms. This means designing decision hierarchies where automated outputs feed into human judgment systematically, not as an afterthought. It means establishing clear data ownership and access controls from day one, not retrofitting them after a breach. It means treating AI infrastructure as operational infrastructure, subject to the same version control, testing, and change management discipline applied to production systems. This is not overhead. This is what separates AI that compounds from AI that decays.
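To make “performance monitoring” concrete, here is a minimal Python sketch, assuming a classification model whose predictions are later matched to real outcomes. It keeps a rolling window of hit/miss results and flags degradation when accuracy falls below the deployment baseline by more than a tolerance, so the system reports drift instead of failing silently. The `DriftMonitor` class, the 500-prediction window, and the 0.05 tolerance are hypothetical choices for illustration, not a prescribed method.

```python
from collections import deque
from typing import Deque, Optional


class DriftMonitor:
    """Hypothetical rolling-accuracy monitor; thresholds are illustrative assumptions."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.05, window: int = 500) -> None:
        self.baseline_accuracy = baseline_accuracy  # accuracy measured at deployment time
        self.tolerance = tolerance                  # allowed absolute drop before alerting
        self.window = window
        # 1 = correct prediction, 0 = incorrect; maxlen discards the oldest entries automatically.
        self._hits: Deque[int] = deque(maxlen=window)

    def record(self, prediction: object, actual: object) -> None:
        """Log one prediction once its real outcome is known."""
        self._hits.append(1 if prediction == actual else 0)

    def rolling_accuracy(self) -> Optional[float]:
        if not self._hits:
            return None
        return sum(self._hits) / len(self._hits)

    def is_degraded(self) -> bool:
        # Wait for a full window so a handful of early misses does not raise an alert.
        if len(self._hits) < self.window:
            return False
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.baseline_accuracy - self.tolerance
```

Rolling accuracy is only one signal; input-distribution drift and data-quality checks would need monitors of their own.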

Moving From Structure to Leverage

The foundation is now in place. You understand that AI functions within systems, not in isolation. You recognize that framework precedes tools and that control requires discipline. The next step moves from structural thinking to operational execution: identifying where AI actually creates leverage within your business, and how to structure data flows and decision hierarchies to capture that leverage systematically.

Where AI Creates Measurable Leverage

Identifying Leverage Points With Specificity

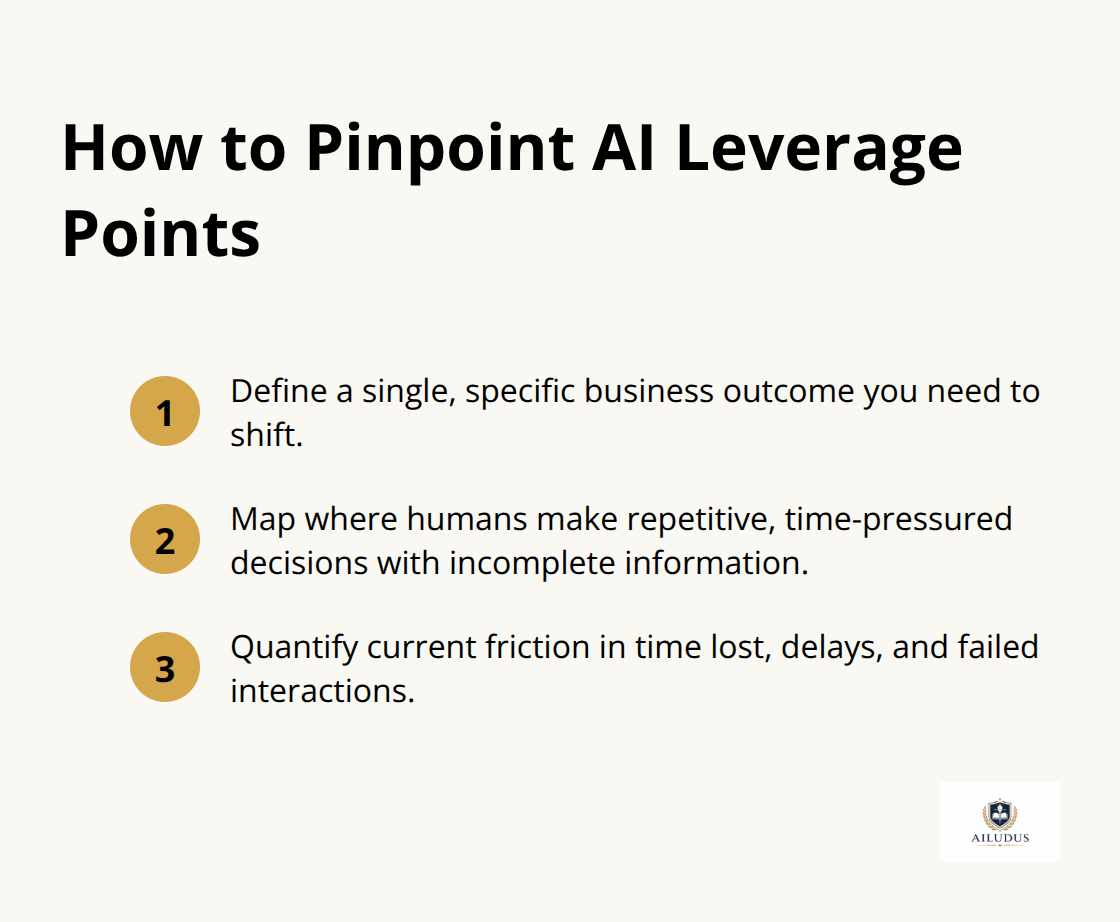

Identifying leverage points requires ruthless specificity. Most organizations describe their AI ambitions in abstractions: improve customer experience, optimize operations, accelerate decision-making. These statements obscure the actual work. Leverage emerges at precise intersections where automated decision-making compresses time, reduces manual processing, or eliminates information bottlenecks that currently constrain revenue or cost.

Start by mapping where humans currently make repetitive decisions with incomplete information under time pressure. Uber’s demand forecasting system processes billions of data points daily to solve one concrete problem: matching driver supply to rider demand in real time. That specificity allowed Uber to structure data flows around a single, measurable outcome. Google Ads applies AI-driven personalization to analyze search queries and user behavior in real time, targeting one leverage point: relevance of ad placement. These systems work because they were built to solve defined problems, not because the AI itself is sophisticated.

The first step is brutal honesty about which specific business outcome you want to shift. Not improve. Shift. Measure the current state: how many hours your teams spend on manual analysis, how many decisions get delayed waiting for data, how many customer interactions fail because information arrives too late. Quantify the friction.

That friction point is your leverage opportunity.

Structuring Data and Decision Flows

Once you identify the leverage point, structure everything around capturing it. Data must flow from source to decision point without intermediaries or delay. Decision hierarchies must route outputs to the people or systems that act on them immediately. Override mechanisms must exist but remain rare enough that they signal when the automated logic fails systematically.

This is where most organizations stumble. They build the AI component brilliantly but leave data pipelines fragmented, decision ownership ambiguous, and feedback loops disconnected. The result is elegant analysis that nobody acts on. At scale, this failure becomes expensive quickly. When you deploy AI across multiple functions, cost per model matters. Infrastructure costs, ongoing monitoring, retraining cycles, and staff time compound.

Total cost of ownership (TCO) for AI systems extends far beyond initial deployment. Model maintenance, through continuous retraining, performance monitoring, and drift detection, represents 15-30% of total AI TCO. Your leverage calculation must therefore account for the full operational burden, not just the analytical benefit. A system that saves one hour of manual work per week but requires four hours of monitoring and maintenance per week destroys leverage rather than creating it.
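The leverage test in the last sentence reduces to simple arithmetic. The sketch below is hypothetical: it assumes staff time can be priced with a single hourly rate and that infrastructure spend folds into one weekly figure, whereas a real TCO model would break both down much further.

```python
def net_weekly_value(
    hours_saved: float,
    maintenance_hours: float,
    hourly_rate: float,
    infra_cost_per_week: float = 0.0,
) -> float:
    """Weekly value of an AI system after its own upkeep (hypothetical helper).

    Positive: the system creates leverage. Negative: it consumes more than it returns.
    """
    # Time saved and time spent on monitoring/retraining are priced at the same rate.
    labor_delta = (hours_saved - maintenance_hours) * hourly_rate
    return labor_delta - infra_cost_per_week


# The example from the text, assuming staff time costs 50 per hour.
print(net_weekly_value(hours_saved=1, maintenance_hours=4, hourly_rate=50))  # -150.0
```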

Building Repeatable Architecture

Structure for repeatability from the beginning. Netflix’s recommendation system uses ensemble methods that combine multiple specialized models, each optimized for a specific aspect of the recommendation problem, within one shared infrastructure; that is why a new algorithm can be added without disruption. This repeatability compounds leverage across functions.

When you solve demand forecasting once with a disciplined architecture, you can apply the same architecture to inventory optimization, pricing, or resource allocation without rebuilding infrastructure for each new use case. This is the difference between scaling from three AI models to thirty as an operational burden and scaling as a systematic expansion. The architecture itself becomes your competitive instrument, not the individual models or algorithms running within it.
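One way to picture “solve once, reuse everywhere” is a single pipeline structure (load history, forecast, publish) that different use cases plug into. The sketch below is an illustration under stated assumptions: `ForecastPipeline`, the data-source lambdas, and the naive moving-average forecaster are all hypothetical stand-ins for real data adapters and models.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence

Forecaster = Callable[[Sequence[float]], float]


def moving_average(history: Sequence[float], window: int = 3) -> float:
    """Naive illustrative forecaster: mean of the last `window` values (assumes non-empty history)."""
    recent = history[-window:]
    return sum(recent) / len(recent)


@dataclass
class ForecastPipeline:
    """One shared structure (load -> forecast -> publish) reused across use cases."""
    name: str
    load_history: Callable[[], List[float]]  # hypothetical data-source adapter
    forecaster: Forecaster = moving_average  # swappable model component

    def run(self) -> Dict[str, float]:
        history = self.load_history()
        return {self.name: self.forecaster(history)}


# The same architecture serves two use cases; only the data source differs.
demand = ForecastPipeline("demand", lambda: [100.0, 110.0, 120.0, 130.0])
inventory = ForecastPipeline("inventory", lambda: [30.0, 40.0, 50.0])
print(demand.run())     # {'demand': 120.0}
print(inventory.run())  # {'inventory': 40.0}
```

Because the forecaster is a pluggable field, a better model dropped in once can serve every pipeline built on the same structure.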

This foundation of repeatable systems and measured leverage points now positions you to address the question that separates sustainable AI from temporary capability gains: how to maintain decision authority and control as you scale automated processes across your organization.

Ownership and Control in AI-Driven Operations

The moment you hand decision-making authority to an automated system, you surrender control unless you deliberately retain it. Most organizations fail here. They deploy AI models, watch accuracy metrics improve, then discover six months later that the system drifts silently or makes decisions that contradict business strategy because nobody defined what decisions the AI owns versus what remains human territory. The distinction matters operationally.

Decision Hierarchies That Preserve Authority

When Uber’s demand forecasting system operates, human dispatchers retain override authority. When the system recommends driver positioning that contradicts local market knowledge or creates unsafe conditions, dispatchers reject it. This override capacity keeps the system accountable and prevents automated logic from compounding mistakes at scale. Without it, you operate a black box that executes increasingly expensive errors undetected.

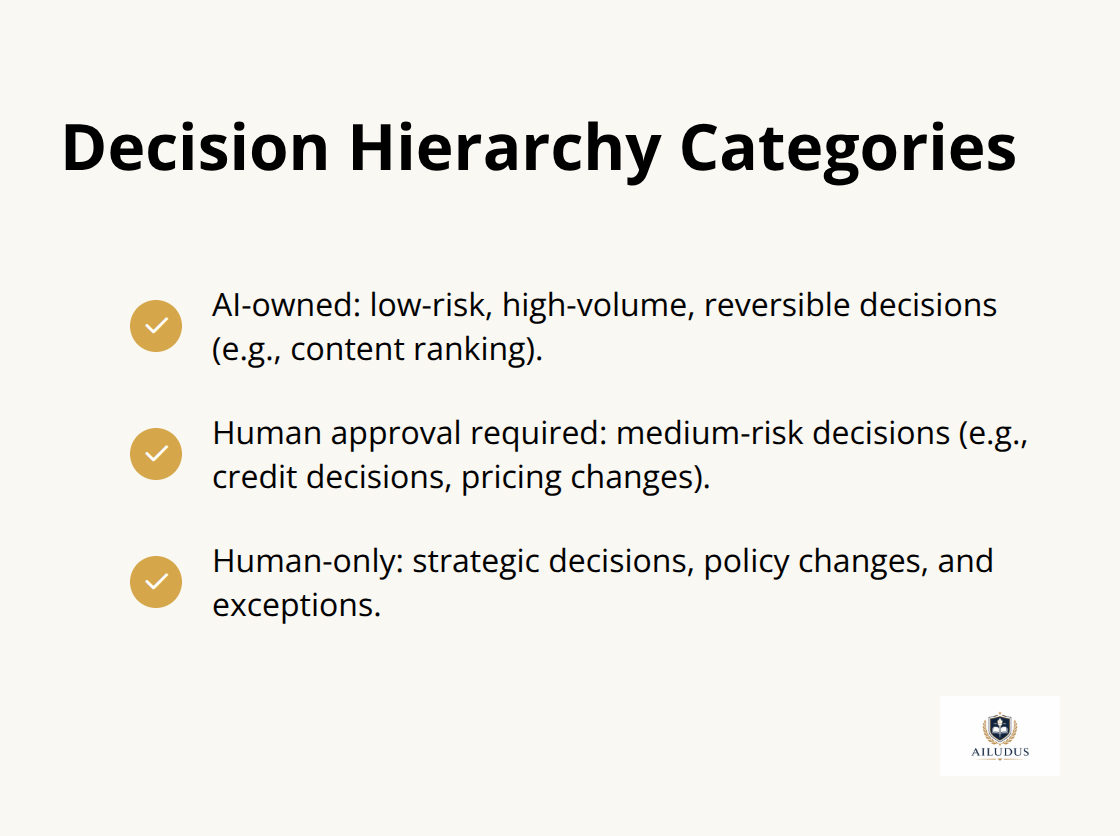

Establish decision hierarchies explicitly. Specify which decisions the AI owns entirely (low-risk, high-volume, reversible decisions like content ranking), which require human approval before execution (medium-risk decisions like credit approvals or pricing changes), and which remain human-only (strategic decisions, policy changes, exceptions). Document these boundaries.

When you scale from one AI model to thirty models across your organization, ambiguity about decision authority becomes expensive quickly. Teams conflict over who approves model outputs. Deployment slows. Accountability vanishes. The hierarchy prevents this by making ownership and approval paths mechanical and transparent.
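A documented hierarchy can be as simple as a lookup table that every deployment consults before acting. This minimal sketch assumes the three authority levels described above; the decision names, the `DECISION_HIERARCHY` table, and the `route` helper are hypothetical examples.

```python
from enum import Enum
from typing import Dict


class Authority(Enum):
    AI_OWNED = "execute automatically"            # low-risk, high-volume, reversible
    HUMAN_APPROVAL = "queue for human approval"   # medium-risk
    HUMAN_ONLY = "human decides"                  # strategic, policy, exceptions


# Hypothetical documented boundaries; each organization defines its own.
DECISION_HIERARCHY: Dict[str, Authority] = {
    "content_ranking": Authority.AI_OWNED,
    "credit_approval": Authority.HUMAN_APPROVAL,
    "pricing_change": Authority.HUMAN_APPROVAL,
    "policy_change": Authority.HUMAN_ONLY,
}


def route(decision_type: str) -> Authority:
    """Look up who owns a decision; undocumented decision types fall back to humans."""
    return DECISION_HIERARCHY.get(decision_type, Authority.HUMAN_ONLY)
```

Defaulting unknown decision types to `HUMAN_ONLY` is a deliberate fail-safe: a newly deployed model cannot quietly acquire authority nobody granted it.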

Override Systems as Diagnostic Tools

Design override mechanisms that signal failure rather than hide it. Most organizations build override systems backward: they allow humans to override the AI whenever they disagree, which turns automation into theater. In a well-designed system the AI executes most of the time, and every override is treated as one of two things: human judgment that should feed back into model retraining, or a systematic failure the AI should learn to detect itself.

Track metrics like override rate and escalation rate meticulously. If override rates exceed expected thresholds for a given decision class, the model is not ready for that decision. If override patterns cluster around specific conditions (certain customer segments, specific time periods, particular transaction types), the model has learned an incomplete pattern and needs retraining. Overrides are not exceptions. They are data that reveals where your automated logic breaks down.
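Override analysis is mostly counting. A minimal sketch, assuming each automated decision is logged with its decision class, a segment label, and whether a human overrode it (a hypothetical log schema); the per-class thresholds are likewise illustrative values you would set yourself.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# One logged decision: (decision_class, segment, was_overridden). Schema is an assumption.
Record = Tuple[str, str, bool]
Key = Tuple[str, str]


def override_rates(records: Iterable[Record]) -> Dict[Key, float]:
    """Override rate for each (decision class, segment) pair."""
    totals: Counter = Counter()
    overrides: Counter = Counter()
    for decision_class, segment, overridden in records:
        key = (decision_class, segment)
        totals[key] += 1
        if overridden:
            overrides[key] += 1
    return {key: overrides[key] / totals[key] for key in totals}


def flag_clusters(rates: Dict[Key, float], thresholds: Dict[str, float]) -> List[Key]:
    """Pairs whose override rate exceeds their class threshold (0.0 if the class has none)."""
    return sorted(key for key, rate in rates.items() if rate > thresholds.get(key[0], 0.0))
```

A flagged pair points at the segment where the model has learned an incomplete pattern. In practice you would also require a minimum number of decisions per segment before flagging, so a single override in a tiny segment does not trigger retraining.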

Modular Architecture and Vendor Independence

Build modular architecture that prevents vendor dependency. This is where most organizations lose control. They adopt a comprehensive platform from a major vendor, integrate deeply, then face a choice years later: pay increasing licensing costs or rebuild everything from scratch.

Modular architecture means selecting best-of-breed components for specific functions rather than purchasing an integrated suite. Your data pipeline runs on one platform, your model training on another, your inference layer on a third. This approach requires more operational complexity initially, but it preserves your optionality. If a vendor raises prices, changes terms, or discontinues a product, you replace that component without dismantling your entire system. This flexibility is not theoretical.

When you evaluate infrastructure for AI workloads, the ability to mix environments without being locked into a single vendor’s ecosystem directly affects your long-term cost structure and strategic flexibility. You maintain control over your technology stack and avoid the compounding costs that emerge when a single vendor controls your entire AI infrastructure.
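In code, modularity usually means the business logic depends on a narrow interface rather than on a vendor SDK. The sketch below uses Python’s `typing.Protocol` for that contract; `InferenceBackend`, `VendorABackend`, and `InHouseBackend` are hypothetical stand-ins, and their arithmetic merely simulates two different providers.

```python
from typing import List, Protocol, Sequence


class InferenceBackend(Protocol):
    """The only contract the rest of the system depends on (hypothetical interface)."""

    def predict(self, features: Sequence[float]) -> float:
        ...


class VendorABackend:
    """Stand-in for one provider's inference service; a real one would call its SDK."""

    def predict(self, features: Sequence[float]) -> float:
        return sum(features)


class InHouseBackend:
    """Stand-in for a replacement component that honors the same contract."""

    def predict(self, features: Sequence[float]) -> float:
        return sum(features) / len(features)


def score_batch(backend: InferenceBackend, rows: Sequence[Sequence[float]]) -> List[float]:
    # Business logic sees only the interface, so swapping providers changes one argument.
    return [backend.predict(row) for row in rows]
```

Replacing a vendor then means writing one new class that satisfies `predict`, while `score_batch` and everything upstream stay untouched.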

Final Thoughts

Organizations that extract sustainable competitive advantage from AI treat it as a business instrument embedded within disciplined operating systems, not as a standalone capability to acquire and deploy. This distinction separates companies that generate compounding returns from those that experience temporary productivity spikes followed by stagnation. Structure precedes tools. When you reverse this sequence, you inherit the constraints of whatever platform you selected first; when you establish your operating framework before selecting instruments, you maintain control over your architecture and preserve optionality as your needs evolve.

Sustainable leverage requires three elements that reinforce each other: identifying specific friction points where automated decision-making compresses time or eliminates information bottlenecks; structuring data flows and decision hierarchies so outputs move directly to execution without intermediaries; and building modular architecture that prevents vendor dependency and lets you replace components without dismantling your entire system. When these elements operate independently, you have isolated AI projects rather than integrated systems that compound value across your organization.

The long-term competitive advantage belongs to operators who treat AI infrastructure with the same rigor applied to production systems. Version control, testing, monitoring, governance, and change management are what separate AI that decays from AI that compounds. We at Ailudus publish disciplined playbooks and operating frameworks designed specifically for builders navigating this transition from framework to execution.

— Published by Ailudus, the operating system for modern builders.