Most agencies treat AI as a cost-reduction tool. That’s backwards. The real leverage comes from building AI systems for clients that become part of your operating structure: systems that scale output without scaling headcount proportionally.

At Ailudus, we’ve seen agencies that integrate AI strategically gain both margin and defensibility. The ones that fail treat it as a technology problem instead of an operational one. This post outlines the frameworks that separate the two.

From Service Delivery to Systematic Output

The transition from labor-intensive client services to systematic output requires a structural shift, not just a tool swap. Most agencies operate on a billable-hour model where output scales linearly with headcount. AI disrupts this equation, but only if you architect it into your operations before you deploy it. The mistake is treating AI as something you bolt onto existing workflows. Instead, redesign the workflow around what AI can systematize, then layer in human judgment where it matters.

Recovering Capacity Through Automation

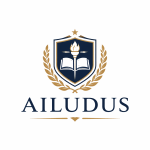

80% of respondents say they’d be more productive if they spent less time in meetings. For agencies, this translates directly to lost billable capacity and delayed client work. When you automate routine coordination, status updates, and reporting cycles, you recover that time and redirect it toward strategy and client outcomes. This is where the margin lives.

Recovering that capacity is not just efficiency; it’s structural profitability. The agencies winning with AI don’t reduce prices. They increase delivery frequency, expand service breadth, or improve output quality without hiring proportionally.

Data collection and organization consume about 3.55 hours per week per marketer. That’s roughly 185 hours annually per person spent on tasks that don’t generate client value. Automating those workflows doesn’t mean eliminating the marketer. It means redirecting their labor toward analysis, strategy, and client relationships where human judgment compounds.
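The annual figure follows from simple arithmetic, sketched below. The 52-week year and the eight-person team are assumptions made for illustration; only the 3.55 hours per week comes from the text above.

```python
# Weekly hours spent on data collection and organization (figure quoted above).
HOURS_PER_WEEK = 3.55
# Assumption: a 52-week year with no adjustment for holidays or leave.
WEEKS_PER_YEAR = 52


def annual_hours(hours_per_week: float, weeks: int = WEEKS_PER_YEAR) -> float:
    """Hours per person per year spent on the task."""
    return hours_per_week * weeks


def team_annual_hours(headcount: int, hours_per_week: float = HOURS_PER_WEEK) -> float:
    """Total hours per year across a team of the given size."""
    return headcount * annual_hours(hours_per_week)


per_person = annual_hours(HOURS_PER_WEEK)
print(f"{per_person:.1f} hours per person per year")  # 184.6, i.e. roughly 185

# Hypothetical eight-person team, for illustration only.
print(f"{team_annual_hours(8):.1f} hours per year across the team")
```

Swapping in your own headcount and weekly estimate gives a rough ceiling on the capacity that automation could redirect, not a guarantee that it will be redirected.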

Building AI Into Your Operating System

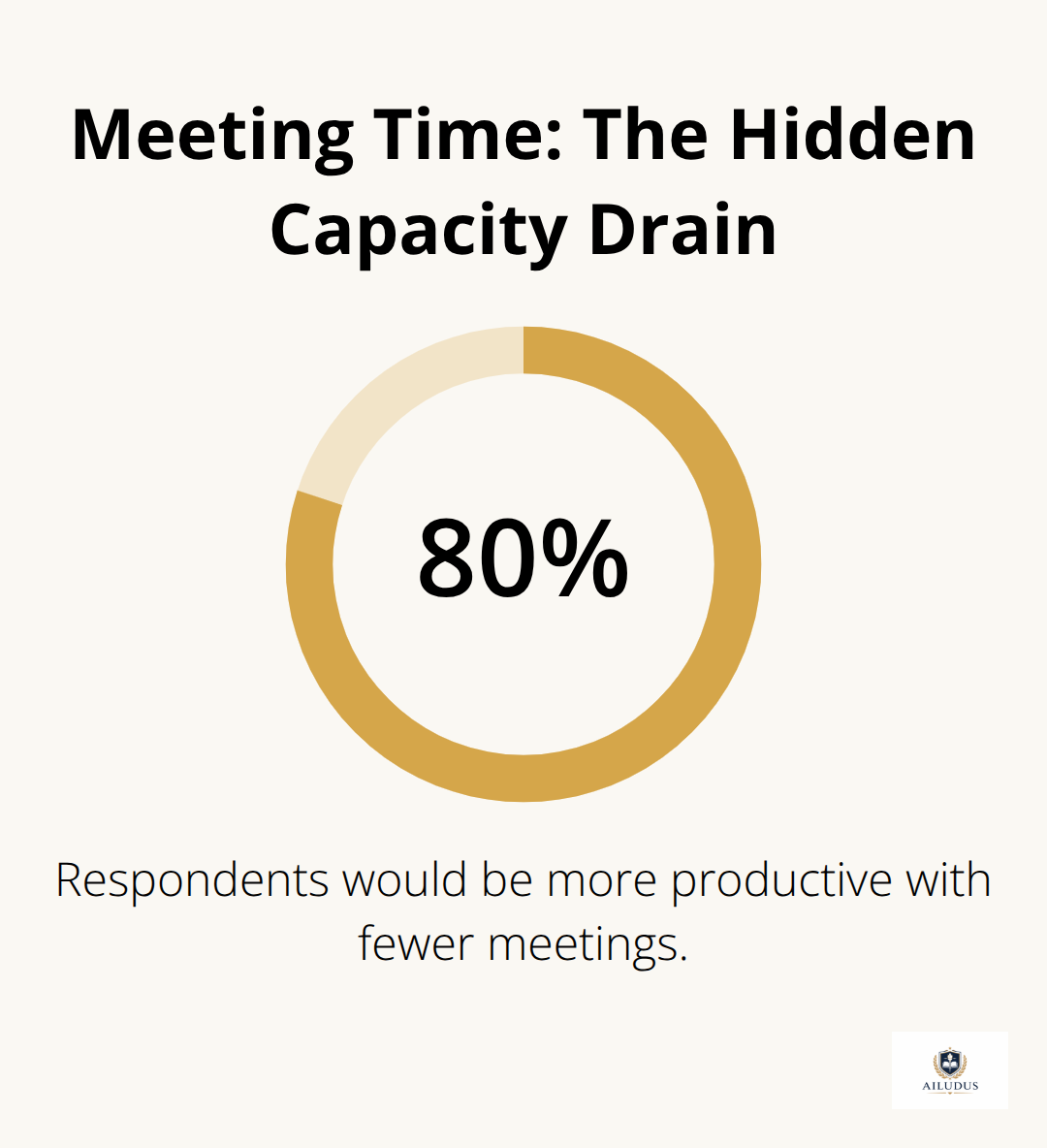

Positioning AI as infrastructure means building it into your operating system so it handles the mechanical parts of service delivery. Client onboarding, reporting, quality checks, task allocation, and performance monitoring are candidates for systematic AI integration. The discipline here is understanding which workflows benefit from automation and which require human discretion.

The first layer is automated reporting. Dashboards deliver consistent, reliable client updates on a fixed schedule, reducing the cognitive load of manual compilation. Your team then interprets the data and recommends actions rather than spending hours assembling reports. The second layer is structuring data flows so AI has clean inputs and clients receive outputs they can act on. This means standardizing how client data enters your systems, how you tag and organize it, and how you surface insights. Without this structure, AI amplification becomes AI noise.

Measuring System Performance

The third layer is building feedback loops that measure system performance. This isn’t about sentiment; it’s about concrete metrics. Track whether automated reports arrive on time, whether clients act on recommendations, whether quality metrics improve, and whether your team’s time allocation shifts toward higher-value work.
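As a minimal sketch of what such a feedback loop might compute, the snippet below derives an on-time delivery rate and a recommendation action rate from a log of report runs. The `ReportRun` record and its fields are hypothetical; your own systems will store this information differently.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class ReportRun:
    """One automated report delivery (hypothetical record shape)."""
    due: datetime
    delivered: datetime
    recommendations: int  # recommendations included in the report
    acted_on: int         # recommendations the client acted on


def on_time_rate(runs: List[ReportRun]) -> float:
    """Share of reports delivered at or before their due time."""
    if not runs:
        return 0.0
    return sum(r.delivered <= r.due for r in runs) / len(runs)


def action_rate(runs: List[ReportRun]) -> float:
    """Share of all recommendations that clients acted on."""
    total = sum(r.recommendations for r in runs)
    return sum(r.acted_on for r in runs) / total if total else 0.0


# Illustrative data only.
runs = [
    ReportRun(datetime(2024, 5, 6, 9), datetime(2024, 5, 6, 8), 3, 2),
    ReportRun(datetime(2024, 5, 13, 9), datetime(2024, 5, 13, 9), 2, 1),
    ReportRun(datetime(2024, 5, 20, 9), datetime(2024, 5, 21, 14), 4, 1),
    ReportRun(datetime(2024, 5, 27, 9), datetime(2024, 5, 27, 7), 1, 0),
]
print(f"on time: {on_time_rate(runs):.0%}, acted on: {action_rate(runs):.0%}")
```

Tracking these two numbers per client over time shows whether the automation is reliable and whether its output is actually used.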

The agencies that scale successfully treat AI integration as an operational redesign problem. They identify which workflows to systematize, structure the data flows that feed those systems, and measure whether the automation actually frees capacity for higher-leverage work. This foundation determines whether AI becomes a margin driver or just another tool consuming budget.

Identifying Which Workflows Actually Justify Automation

Start With a Time Audit

The most common mistake agencies make is automating the wrong workflows. They target tasks that feel repetitive without measuring whether the automation actually frees capacity for higher-value work. Map your client onboarding and kickoff lifecycle over two weeks, logging how long each step takes and who performs it. You’re looking for workflows where the same sequence repeats across multiple clients with minimal variation.

Client onboarding, proposal assembly, data collection, and reporting cycles are typical candidates. But the real signal isn’t just repetition; it’s whether automating that workflow lets your team redirect time toward work that moves client outcomes or improves margins. If you automate a task that consumes two hours per week but nobody redirects that time, you’ve created efficiency theater, not leverage.
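The time audit described above can be summarized with a few lines of code. This is a sketch under stated assumptions: the `LogEntry` fields and the three-client threshold are hypothetical choices, and the sample entries are invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LogEntry:
    """One logged step from the two-week audit (hypothetical shape)."""
    step: str
    client: str
    person: str
    minutes: int


def automation_candidates(
    entries: List[LogEntry], min_clients: int = 3
) -> List[Tuple[str, int, int]]:
    """Return (step, total_minutes, client_count) for steps that repeat
    across at least `min_clients` clients, largest time cost first."""
    minutes = defaultdict(int)
    clients = defaultdict(set)
    for e in entries:
        minutes[e.step] += e.minutes
        clients[e.step].add(e.client)
    rows = [
        (step, minutes[step], len(clients[step]))
        for step in minutes
        if len(clients[step]) >= min_clients
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Illustrative audit log only.
log = [
    LogEntry("intake form review", "acme", "dana", 40),
    LogEntry("intake form review", "birch", "dana", 35),
    LogEntry("intake form review", "cobalt", "lee", 45),
    LogEntry("weekly report", "acme", "lee", 90),
    LogEntry("weekly report", "birch", "lee", 80),
    LogEntry("weekly report", "cobalt", "lee", 85),
    LogEntry("custom pricing call", "acme", "sam", 60),
]
for step, total, count in automation_candidates(log):
    print(f"{step}: {total} min across {count} clients")
```

The ranking only flags repetition and time cost. Whether the freed time is redirected to higher-value work still has to be checked by hand.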

Surface Hidden Decision Rules Before Automating

Once you’ve identified candidates, structure the data flows before you deploy the automation. This means defining clean inputs: how client data enters your systems, what format it arrives in, and who validates it. Without standardization, AI systems produce garbage output from garbage input.

Many agencies fail here because they try to automate workflows that still depend on manual interpretation or undocumented decision rules. Undocumented knowledge puts critical processes at risk. You need to surface those rules, codify them, and decide whether they can be systematized or whether they require human discretion. This step separates systems that compound from tools that create busy work.

Measure Output Quality Ruthlessly

Track whether automated reports arrive on schedule, whether clients act on recommendations, and whether clients perceive the output as reliable. Net Promoter Score surveys work here, but follow up on scores below eight with direct calls. You need to know whether automation delivers value or just reduces friction for your team.
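A small sketch of the survey step: it computes Net Promoter Score using the standard definition (promoters score 9 or 10, detractors score 0 through 6) and lists the clients who scored below eight for a follow-up call. The client names and scores are invented for illustration.

```python
from typing import Dict, List


def net_promoter_score(scores: List[int]) -> float:
    """Standard NPS: percentage of promoters (9-10) minus
    percentage of detractors (0-6)."""
    if not scores:
        raise ValueError("at least one score is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def needs_follow_up(responses: Dict[str, int], threshold: int = 8) -> List[str]:
    """Clients whose score is below the threshold, in survey order."""
    return [client for client, score in responses.items() if score < threshold]


# Illustrative survey responses only.
responses = {"acme": 10, "birch": 7, "cobalt": 4, "delta": 9}

print(net_promoter_score(list(responses.values())))  # 25.0
print(needs_follow_up(responses))  # ['birch', 'cobalt']
```

Note that the follow-up threshold of eight includes passives who scored seven, so the call list is wider than the detractor group alone.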

The agencies scaling successfully treat the data layer as infrastructure. They invest in clean integrations, standardized tagging, and documented decision logic before layering AI on top. This takes discipline and upfront work, but it’s what separates systems that compound from tools that create busy work.

Structure Data Flows as Your Foundation

Without clean data architecture, automation amplifies inconsistency rather than reliability. Define which systems feed client data into your workflows, how that data moves between tools, and what validation occurs at each handoff. Document the decision logic your team applies when data arrives incomplete or contradictory. Make explicit what was previously implicit.
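One way to make handoff validation explicit is a small check that runs before any record reaches an automated workflow. The sketch below assumes a hypothetical intake record with three required fields; incomplete or malformed records are routed to human review instead of being passed downstream.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical required fields and accepted types for a client data record.
REQUIRED_FIELDS = {
    "client_id": (str,),
    "period": (str,),
    "spend": (int, float),
}


def validate(record: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the record is clean."""
    problems = []
    for name, accepted in REQUIRED_FIELDS.items():
        value = record.get(name)
        if value is None:
            problems.append(f"missing {name}")
        elif not isinstance(value, accepted):
            problems.append(f"{name} has unexpected type {type(value).__name__}")
    return problems


def route(
    records: List[Dict[str, Any]]
) -> Tuple[List[Dict[str, Any]], List[Tuple[Dict[str, Any], List[str]]]]:
    """Split records into clean ones and ones needing human review."""
    clean, review = [], []
    for record in records:
        problems = validate(record)
        if problems:
            review.append((record, problems))
        else:
            clean.append(record)
    return clean, review


# Illustrative records only.
records = [
    {"client_id": "acme", "period": "2024-05", "spend": 1200.0},
    {"client_id": "birch", "period": None, "spend": "n/a"},
]
clean, review = route(records)
print(len(clean), "clean;", len(review), "for review")
for record, problems in review:
    print(record["client_id"], problems)
```

The review queue is where documented decision logic applies: each problem type should map to a written rule for how your team resolves it.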

This foundation determines whether AI becomes a margin driver or just another tool consuming budget. The next step is translating this operational clarity into client handoffs that actually work, where your systems and your clients’ systems exchange information reliably enough that automation produces value on both sides.

Where Agencies Stumble With Automation

The agencies that fail with AI automation don’t lack access to tools. They lack operational clarity before they deploy them. The pattern is consistent: teams identify a workflow, implement an AI system to handle it, and within weeks the automation produces unreliable output because the underlying process was never standardized. The cost isn’t just wasted software licenses. It’s eroded client trust and team credibility around automation itself. The discipline required is unglamorous: you must document and standardize your processes before you automate them, not after.

Most agencies rush to automation because they see the efficiency gains available to competitors. What they miss is that premature automation doesn’t just fail; it corrupts your operational data and client relationships simultaneously. If your proposal assembly process depends on undocumented templates, unstandardized client intake forms, and manual judgment calls about scope, automating that workflow won’t systematize it. It will amplify the inconsistency. Your clients receive proposals that contradict each other, your team spends time correcting AI-generated errors instead of redirecting capacity, and you’ve created a liability where you expected a lever.

Standardize Before You Automate

The fix is to reverse the sequence: standardize first, automate second. This means spending two to four weeks documenting how your proposal process actually works, identifying which decision points require human judgment and which you can codify, and testing those standards across five client engagements before you write a single line of automation logic. This feels slow. It produces compounding returns.

You surface the undocumented rules your team applies every day: the judgment calls about scope, the templates that vary by client type, the quality thresholds that shift based on context. Once you make these rules explicit, you can decide which ones belong in code and which ones require human discretion. The agencies that scale successfully treat this step as non-negotiable. They invest the upfront work because they know that automation amplifies whatever process sits beneath it.

Communicate System Changes to Clients

The second failure mode emerges when agencies automate without telling clients what changed. Clients notice that reports arrive at different times, recommendations shift in quality or consistency, or the output format changes without explanation. They don’t know whether to trust the new system. The agencies that scale communicate the change explicitly: they explain that reporting now runs on a fixed schedule powered by automated data aggregation, that quality checks happen systematically rather than manually, and that their team now interprets and acts on those outputs instead of assembling them.

This transparency converts potential friction into perceived reliability. Clients who understand the system trust it. Clients who suspect they’re being handed off to automation resent it. The difference is a conversation that takes thirty minutes and prevents months of erosion. You’re not hiding the automation; you’re positioning it as infrastructure that improves consistency and frees your team to focus on strategy and outcomes.

Treat AI Integration as an Operational Problem

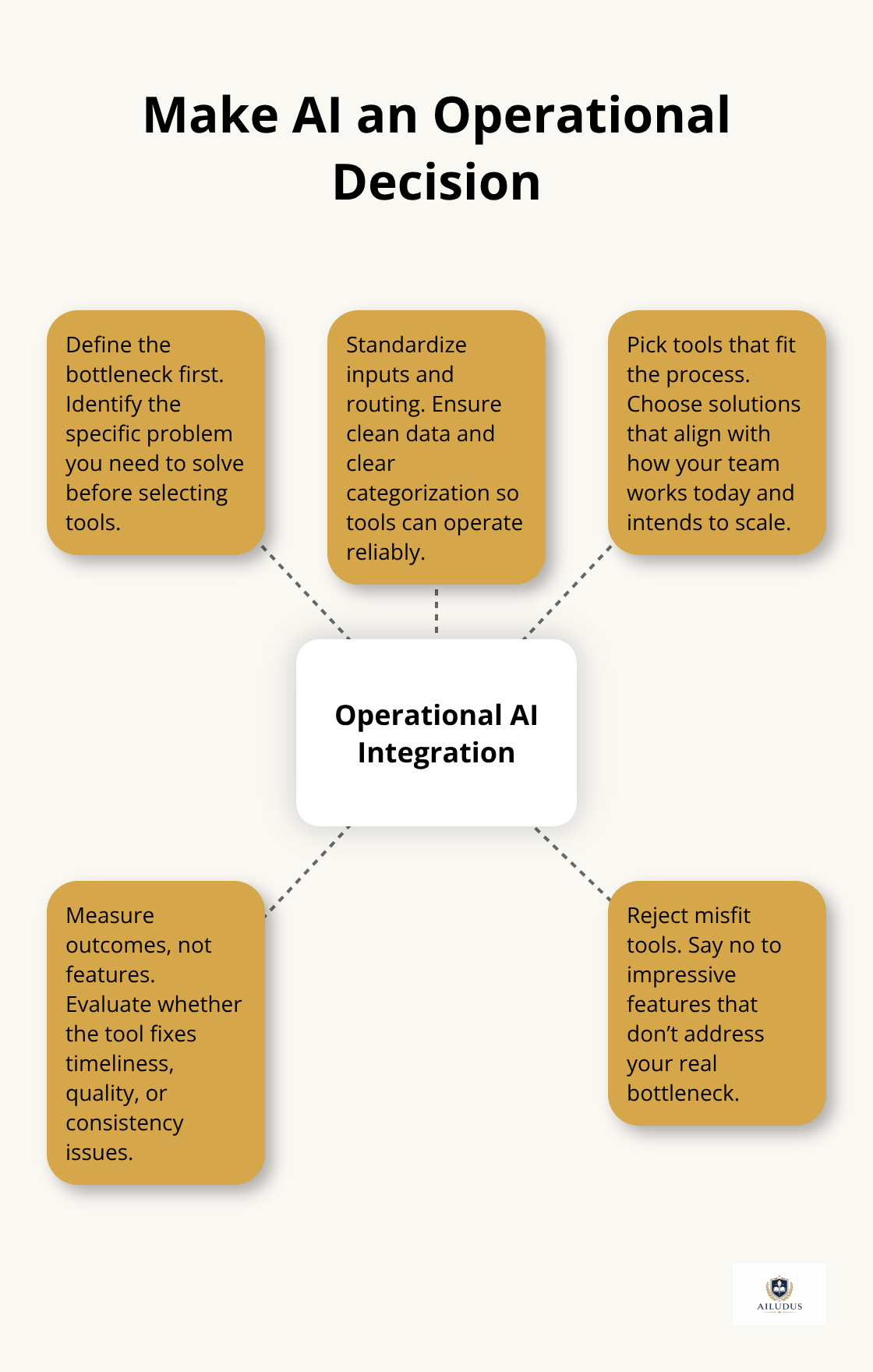

The third pitfall is treating AI integration as a technology decision when it’s fundamentally an operational one. Agencies select tools based on feature lists or integration breadth without first asking whether their internal processes can actually feed those tools clean data. They deploy chatbots without standardizing how client inquiries are categorized or routed. They implement automated quality checks without defining what quality metrics actually mean for their specific deliverables. The tool becomes the constraint instead of the solution.

The agencies that win reframe this entirely: they identify a specific operational problem (reports arriving late, client onboarding taking twelve days, quality variations across accounts) and then select tools that fit that problem. They measure whether the tool solves the operational problem, not whether it has impressive features. This means your technology decisions follow your process decisions, not the reverse. It also means you’ll reject tools that seem powerful but don’t address your actual bottleneck. That discipline is what separates agencies that scale margins from agencies that scale costs.

Final Thoughts

The agencies that scale margins build AI systems for clients that become structural advantages: infrastructure that compounds over time. This requires a fundamental shift in how you think about automation. It’s not about cutting costs. It’s about building systematic output that clients depend on and that your team can defend. When you position AI as operational infrastructure rather than a cost-reduction tool, the economics transform. Automated reporting redirects your analyst’s labor toward interpretation and strategy instead of assembly work. Systematic quality checks standardize the baseline so human judgment operates at a higher level. Client onboarding workflows that run on fixed schedules free your team to focus on outcomes instead of logistics.

The margin lives in this redirection of effort. You’re not doing more work with fewer people. You’re doing higher-value work with the same people. That’s defensible. That’s what clients pay for. The discipline required is unglamorous: you must document your processes before you automate them, communicate system changes to clients explicitly, and treat AI integration as an operational problem rather than a technology one. Most agencies skip these steps because they feel slow. The agencies that scale successfully treat them as non-negotiable.

When you build AI systems for clients that improve consistency, reliability, and output quality, you create dependencies that strengthen your position. Clients trust systems they understand. They value infrastructure that compounds. They renew contracts with agencies that deliver systematic improvement, not just labor. Start with a time audit, identify which workflows justify automation, standardize before you automate, and measure system performance ruthlessly. Explore Ailudus for frameworks and instruments that support this work.

— Published by Ailudus, the operating system for modern builders.